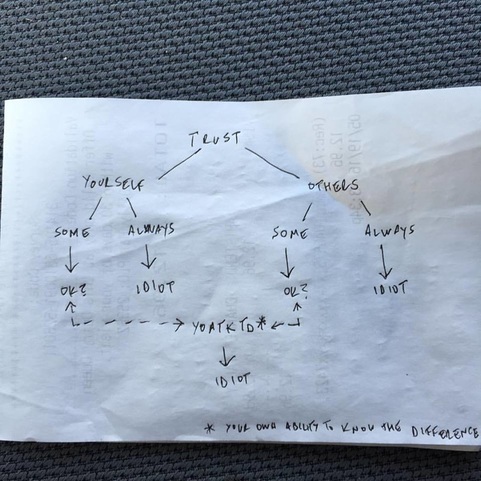

How does one avoid being an idiot in the analysis of gathered information and the conclusions drawn from it? Do not trust anything that one cannot confirm for oneself, right? Under such a premise, one must trust one’s own ability to discern information and draw appropriate conclusions. If one trusts one’s own ability to filter through multiple data points and infer correctly from them, how consistently can that self-sourced evaluation be trusted? If one trusts oneself some or most of the time, this may be a safe bet only some of the time at best. If one trusts oneself always, one can safely self-diagnose as an idiot.

If one does not want to be an idiot by way of self-trust, then one may conclude that one should seek out the input and knowledge of others. Even when wise counsel is sought, one must still, at some point, rely upon one’s own internal discernment to determine whether the analysis performed by others is reliable. Even smart and reliable people have limitations and can be wrong from time to time, so no one person can be trusted 100% of the time. If one trusts others some or most of the time, this may be a safe bet only some of the time at best. If one trusts others always, one can safely be diagnosed by others as an idiot.

The only option that remains is a hybrid of the previous two: inform oneself while learning when to trust the input of others. Yet the paradox continues: can one trust what we ubiquitous analysts call the YOATKTD metric, Your Own Ability To Know The Difference? As it turns out, in the effort to avoid being an idiot, the only options are to trust oneself or to trust others, and in the final analysis one will very likely find that there is little that anyone can do to avoid it.


Author

Thoughts on personal and professional development. Jon Isaacson, The Intentional Restorer, is a contractor, author, and host of The DYOJO Podcast. The goal of The DYOJO is to help growth-minded restoration professionals shorten their DANG learning curve for personal and professional development. You can watch The DYOJO Podcast on YouTube on Thursdays or listen on your favorite podcast platform.



Jon Isaacson

Connect. Collaborate. Conquer.

© COPYRIGHT 2015. ALL RIGHTS RESERVED.
